For a while the OpenAI 'ouster' was the top story on the New York Times home page, and they devoted considerable ongoing coverage to it. Given the impact of ChatGPT and the commercial, social and policy pervasiveness of AI it was big news. And even if things have settled down again, it has changed perceptions of the organization and kept concerns about AI in the news. Here are some thoughts about what has happened, with some notes about library connections and impacts. I include a short coda on environmental analysis in the library community.

The future is the future

Writing about libraries and AI recently, I noted that the situation was unpredictable. How true that turned out to be! I was thinking about how social practices and their material base in technology interact unpredictably (think of the selfie, then Instagram, then the impact on travel or the baleful influence on mental wellness, for example). However, two things jumped out at me over the last few weeks.

First is the impact of personalities and politics on organizational direction and values. Quite often this may be hidden from us, or go undocumented in the public record. And while we do not know exactly what happened, clearly personal dynamics were at play here. This was at various levels. In the Board interactions. In the relationship between the Board and senior staff. In the staff responses at OpenAI. In the personal and business network of Sam Altman. And so on.

We have seen another extraordinary recent example of personality at play in the events surrounding the acquisition and development of Twitter, and the destruction of value that has followed. This may be unusual and exceptionally visible, but it does show how things can go in unanticipated directions.

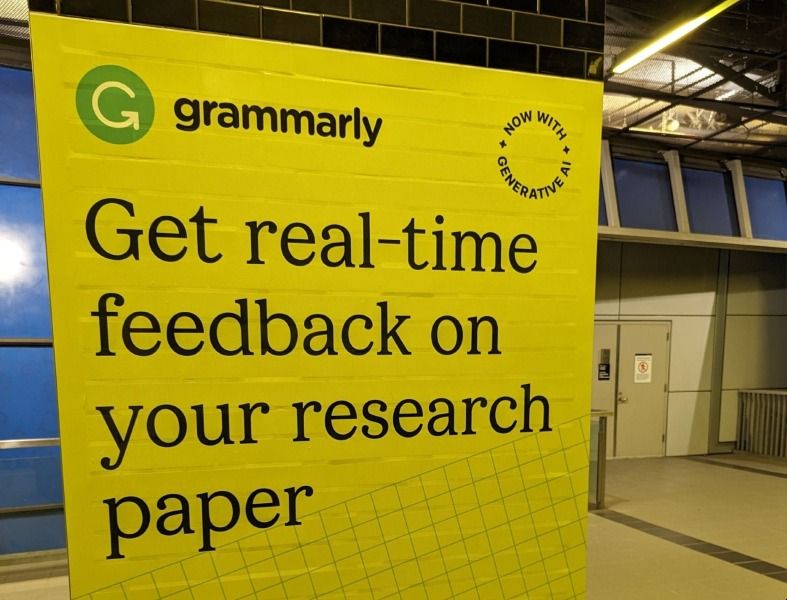

Second is some of the technical direction. I have observed how OpenAI was reportedly surprised at the success of ChatGPT. It was interesting to see this confirmed in the extensive background reporting around the events. Internally, the launch of ChatGPT was referred to as a low-key research preview and was not expected to attract very much notice. Of course, things turned out very differently, and the ChatGPT launch is now seen as a significant inflection point, on a par with the introduction of the graphical web browser or the iPhone, which has led to major investment, experimentation and impact.

After things settled down, OpenAI employees used DALL-E, the company’s A.I. image generator, to make a laptop sticker labeled “Low key research preview.” It showed a computer about to be consumed by flames. // NYT

What is the narrative?

The dominant narrative to emerge in the commentary was about the balance of existential risk and extreme reward.

The ouster on Friday of Mr. Altman, 38, drew attention to a longtime rift in the A.I. community between people who believe A.I. is the biggest business opportunity in a generation and others who worry that moving too fast could be dangerous. And the vote to remove him showed how a philosophical movement devoted to the fear of A.I. had become an unavoidable part of tech culture. // New York Times

This binary contrast between commercial opportunity, often coupled with a techno-optimism about impact, and the strong societal risk posed by unstable or out of control systems seems to deflect attention from critical engagement with current deployment. This narrative is supported in some ways by the continued stated goal of OpenAI to achieve AGI (Artificial General Intelligence) in a responsible way, as a focus on AGI moves the conversation to an undefined future state and away from current business and service choices.

This general media framing of the current state of AI tended to bypass the factors accompanying the broad rollout of these technologies, sometimes preferring speculation about a mysterious Q* initiative. Meanwhile, the rapid spread of AI continues across sectors, across startups and established businesses, and across the scholarly and cultural domains. Informed choices and use, responsible deployment, policy and ethical frameworks: these are all now central questions in many contexts. The drama narrative pushed aside concerns around some of these current issues and possible remediations (to do with composition of training data, for example, or documentation, hidden labor, mitigation of bias, and so on). It also blurs the real differences among actually existing services in terms of training data, business models, reuse of data, and so on.

This is important ground for libraries to occupy - to advocate, to advise and to make informed decisions about AI that is actually existing and increasingly in operation (AI-AE-IO). Libraries, and the organizations that represent them, are well placed to understand and represent public, scholarly and cultural interests. AI is not an abstract entity: it is delivered in particular products and services, which incorporate decisions about how they are designed, built and operate. What data is used or collected? What behaviors can you expect? And so on. This was more fully discussed in my post on library contexts.

Board and governance

The complicated nested governance model of OpenAI has been much referenced, where a not-for-profit dedicated to safe deployment has a for-profit subsidiary, with a 'capped profit' model. The Board's fiduciary responsibilities to society are laid out in the documentation.

We initially believed a 501(c)(3) would be the most effective vehicle to direct the development of safe and broadly beneficial AGI while remaining unencumbered by profit incentives. We committed to publishing our research and data in cases where we felt it was safe to do so and would benefit the public.

They set up a for-profit subsidiary capable of raising capital to fund development at the required level. Limits were placed on what individuals or investors could take out of the company.

The for-profit’s equity structure would have caps that limit the maximum financial returns to investors and employees to incentivize them to research, develop, and deploy AGI in a way that balances commerciality with safety and sustainability, rather than focusing on pure profit-maximization.

Some felt the incentives were so misaligned that the structure was inherently unstable. And it seems at this stage that the for-profit incentives are driving the organization, within an overall stated commitment to responsible development. However, what is surprising is that the Board took action at such a late stage given the pace of developments. And also that all parties allowed things to arrive at this apparent breakdown. This is especially the case given what appear to be major philosophical differences between the Board and Altman.

The intervention seemed too late in the game when so much was at stake, to Microsoft, for sure, given the scale of their investment, but also to the whole ecosystem that has come to depend on OpenAI. OpenAI had become too important to too many stakeholders – in terms of both investment and use – for those involved not to find a vehicle in which to continue. So, admittedly with hindsight, the outcome is not too surprising, even if it ran through a hectic unpredictable course. It will be interesting to see what changes in governance now evolve as the very different new board settles in.

From a library point of view, I think this highlights the importance of governance, and underlines board responsibilities as fiduciaries. This is especially important in a community which relies so much on non-profit and collaborative organizations for advancing its aims. It is tempting to think of boards as advisory committees, or as career accelerators. However, board members have an important responsibility for thinking about the vitality of organizations, and by extension of the library community. And also for thinking about which organizations are useful and how existing organizations can work together. This may involve difficult decisions.

Board members act as trustees of the organization’s assets and must exercise due diligence and oversight to ensure that the organization is well-managed and that its financial situation remains sound. // Board Source

Concentration of power and reward

Microsoft, and accomplished media communicator Satya Nadella, moved quickly and, with other investors, were clearly major players in the flurry of discussions after the ouster. While Microsoft did not have a board seat, it had strong influence through the scale of its investment, which was partly structured as infrastructure support and rights to OpenAI intellectual property. In interviews, Nadella emphasized the close operational integration between the companies and their shared technical leadership. In this way, it appears Microsoft was well placed to pick up or to continue with developments itself, even if OpenAI were to go in another direction.

The strength of the reaction to the news was a signal of how important OpenAI had become in financial terms, but also in service terms. Many organizations have built services on top of its APIs or used it in other ways. And while systems might be engineered to swap in a different language model service at the backend, there is effort involved, and GPT-4 currently leads in terms of usage, functionality and ongoing development.

The outcome seems to have confirmed OpenAI as the clear leader in commercial AI development, even if it has exposed some issues and raised questions about organization, leadership and direction. The organization has had to slow down its subscription business given issues handling the volume of demand. Its close relationship with Microsoft is also now in clear view.

Google has recently released its Gemini model in various versions, and has swung the company behind a push to integrate AI, to provide AI services, and not to let the advantage go elsewhere. It has seen how its core search business is potentially vulnerable.

OpenAI/Microsoft and Google are the dominant players, and have made massive investments to position themselves as AI leaders. We seem to be witnessing the same network dynamic we have seen since the web first began. At that time there was great creativity and innovation diffused throughout many organizations on the network. However, the power of network effects created a small number of dominant players in near monopoly positions. Think of Google, Amazon, Facebook. Things may change (look at Twitter again) but we see this dynamic again and again.

It is clear that Microsoft and Google are determined not to be displaced by new entrants, and they want to take a large share of what is seen as an inevitable AI bounty. Microsoft's position is deeply connected to OpenAI, and protecting and building on that lead is part of the recent dynamic. (See the HuggingFace ratings of Chatbots.)

Amazon, Nvidia and a variety of others are also important. For example, Anthropic (set up by a group which left OpenAI earlier, concerned about safety issues), Cohere, and others are now important players in providing AI-based infrastructure and services. There is strong interest in the new French entrant, Mistral, which recently released an open source model capable of running on personal machines. There are many, many others. A service like perplexity.ai shows the potential threat to Google's search business, and one wonders whether it will be bought by one of the bigger players.

Now, as I noted, things are also very unpredictable. And there certainly are robust open source and other alternatives. Will they remain niche? Or become central players also? Are there other players who will step in? And I haven't said anything about China. For the moment, however, it would seem that Microsoft/OpenAI and Google are set to be the major players in a crowded field for a while yet.

It is important that libraries understand some of these market dynamics, but also look beyond the dominant players where it makes sense given the rich ecosystem that is emerging. For example, there are some important initiatives in cultural or scholarly domains for specialist purposes, a range of focused applications, a developing set of open source models, and so on.

Research-forward?

The boundary between the academy and AI companies has been very porous, and some major players move between the two. The OpenAI Chief Scientist, Ilya Sutskever, who was on the Board which dismissed Altman, had worked with Geoffrey Hinton at Toronto.

I was struck by this piece underlining a division between product and research, or between an industrial perspective and an academic one. This is related to the reward vs risk dynamic, but emphasizes another dimension.

One thinks AGI is better achieved by deploying products iteratively, raising money, and building more powerful computers — what we could call the industrial/business approach. The industrial approach group includes Altman and Brockman, who have championed the idea of iterative deployment in public the most. The other group, comprised of those who executed the coup against them, including Chief Scientist Ilya Sutskever, thinks AGI is better achieved by conducting deeper R&D on things like interpretability and alignment and devoting fewer resources to making consumer-oriented AI products — what we could call the academic approach. // The Algorithmic Bridge

Clearly, I do not know how strong this dynamic was, but it seems at least somewhat plausible.

What this whole episode does seem to signal is the emergence of the industrial approach as a dominant shaper. The commercial interests seem to be driving OpenAI (and certainly drive Microsoft). And clearly Google's focus is to counter the existential competitive threat that AI offers to its core business.

The competition for scarce talent is intense, with very high salaries being reported. It was interesting to see high profile industry figures (e.g. Marc Benioff at Salesforce) publicly offer jobs to OpenAI employees. In a fast moving field, the connection between industrial R&D and work in the academy will remain strong, as will the competition for scarce skills. But AI has very quickly been industrialized and entered a new phase.

Acting in public

This was big news and many media resources and commentators were focused on it. Nevertheless, it was interesting to see how much was known so quickly, and how closely developments were tracked and reported. And no doubt some of the actors were keen to use the media and social media to advance positions or apply pressure.

Again, the parallel with Twitter is interesting. In each case, there is this twin dynamic at play. We know much more in almost real time about what is happening, and we also know that various of the players are strategically controlling the selective release of information.

It is interesting to see this evolution. Major players are news makers in that they are the subject of news attention. They are also news makers in that they now have direct access to news platforms themselves.

Of course, we do not know what we don't know.

Coda

One doesn't expect all librarians to follow the ins and outs of the AI industry. Or indeed of the publishing industry, especially now with all the movement around the varieties of open access, workflow solutions, acquisitions, and other influences. It can even be difficult keeping track of the for-profit and non-profit suppliers in the library community.

However, if one wants to track the AI industry and R&D developments there is a lot of material available. It has been interesting to see the range of analysis and commentary around the OpenAI question. This is not too surprising given the scale of the social and business interests at play. There is some commentary and analysis around publishing, although it is a challenge to keep abreast of the variety of OA models and directions. There is less on library infrastructure and services.

It does again prompt me to wonder why we don't see more cumulative critical analysis in our own community. I am thinking about how we source and sustain critical infrastructure and services, about industry trends, about mergers and acquisitions, about the variety of community initiatives and the relationships between them, and so on.

What ongoing conversation, analysis, market intelligence or critical journalism is available to the library director wanting to make a choice about investment, collaborative arrangements or procurement? What comparisons or case studies are available to the non-profit board member thinking about their future in an evolving service landscape?

Of course, there is some useful work from several sources. I was also quite interested in how SPARC has commissioned the type of analysis it would like to see. In aggregate however we are not strong on the commentary and analysis that more directly informs decision-making or influences policy.

There is certainly a scale issue; it is not a very large well-resourced community. Is library journalism and related activity overly beholden to advertising or sponsorship interests? I wonder is the library community more or less willing to pay for market intelligence than the K12 education sector, say, or other social sectors? Or indeed than their parent municipalities or colleges? Or maybe there is more library interest in resources outside the library community, on public policy, technology, education, urban affairs? Or maybe the high social capital created through rich personal and institutional interactions within professional associations and consortia helps reduce uncertainty and increase confidence in planning?

Or maybe I am suggesting an issue that does not exist!? Something to return to.

Acknowledgements: I am grateful to Roger Schonfeld who generously commented on a draft.

Incidentally, Roger has written about some of the issues touched on in the Coda here. A reference there reminds me of the piece Scott Walter and I wrote about how the library community might benefit from what we called a platform publication, a common point of reference which aggregates attention and provides a platform for engaging a large part of the community (compare Educause Review or Communications of the ACM for example).

Picture: The feature picture is from the chess collection at Cleveland Public Library.

Related posts: See my series of longer posts for a fuller discussion of AI. I plan a fourth in the series at some stage looking at potential impact on library services.

Update: 12/17/2023 minor editorial adjustments.